A product team at a mid-size SaaS company spent five days in a design sprint. Workshops, sticky notes, a prototype, user testing on Friday. Everyone left feeling like they’d done something important. Six weeks later, nothing had shipped.

The insights were still in a Miro board nobody opened. That is the design sprint problem in one paragraph. The original design sprint, developed at Google Ventures, was built for product teams trying to answer a big, fuzzy question quickly. It’s genuinely useful for that. But most growth and CRO teams are not trying to answer fuzzy questions. They are trying to run experiments. And a five-day workshop framework is a slow, expensive way to do that.

The Growth Sprint™ borrows the compressed timeline from the design sprint but replaces the prototype-and-test structure with something built for experimentation. The output is not a concept. It is a live test.

What a Design Sprint Actually Is

If you have not encountered it before, a design sprint is a structured five-day process.

- Day one, you map the problem.

- Day two, you sketch solutions.

- Day three, you decide what to build.

- Day four, you build a prototype.

- Day five, you test it with real users.

Jake Knapp created it at Google Ventures and wrote a whole book about it. The core idea is good. You compress months of back-and-forth into one week by forcing decisions and keeping the group small.

Where it breaks down for growth teams is the prototype step. A prototype tells you if people understand a concept. It does not tell you if they will convert. Usability and conversion are related but not the same thing. Someone can navigate your checkout prototype perfectly and still abandon the real one.

CRO teams need behavioural data from real traffic. Not simulated sessions with five recruited participants.
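To put a rough number on that gap, here is a minimal sketch comparing how tightly a conversion rate can be estimated from five sessions versus a few thousand. The Wilson score interval is a standard statistical formula, but the function name `wilson_interval` and the sample counts are illustrative assumptions, not figures from any real test.

```python
import math


def wilson_interval(conversions: int, visitors: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a conversion rate."""
    if visitors <= 0:
        raise ValueError("visitors must be positive")
    p = conversions / visitors
    denom = 1 + z**2 / visitors
    centre = (p + z**2 / (2 * visitors)) / denom
    half_width = z * math.sqrt(p * (1 - p) / visitors + z**2 / (4 * visitors**2)) / denom
    return centre - half_width, centre + half_width


# Hypothetical numbers: both samples show the same observed 20% rate.
prototype_test = wilson_interval(conversions=1, visitors=5)
live_traffic = wilson_interval(conversions=1000, visitors=5000)

for label, (low, high) in [("5 participants", prototype_test), ("5,000 visitors", live_traffic)]:
    print(f"{label}: plausible conversion rate between {low:.1%} and {high:.1%}")
```

With five participants the plausible range spans most of the scale, which is why a prototype session can tell you whether people understand the page but not whether they will convert.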

What a Growth Sprint Is Instead

The Growth Sprint is a compressed experimentation cycle. The goal is to go from problem to live experiment in roughly two weeks, without skipping the diagnostic work that makes experiments worth running.

Here is how the framework fits together.

Day one

You start with a specific problem, not a vague brief. Conversion is dropping on the pricing page. Activation rate is low for users who sign up via paid search. Something specific with a number attached. Then you pull the evidence: session recordings, funnel data, heatmaps, any existing research. You are not brainstorming solutions yet. You are building a case.

The case becomes a hypothesis. A real one, structured as: if we change X for audience Y, we expect to see Z, because of W. The “because” is what most teams skip. Without it, you are just guessing with extra steps.
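One way to keep the “because” from being skipped is to write the hypothesis down as a small record that refuses to exist without it. This is a sketch only: the `Hypothesis` class, its field names, and the example values are hypothetical and simply mirror the X, Y, Z, W structure described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Hypothesis:
    change: str     # X: what we change
    audience: str   # Y: who sees the change
    expected: str   # Z: the result we expect to see
    rationale: str  # W: the "because" drawn from the evidence

    def __post_init__(self) -> None:
        # Reject a hypothesis with any part left blank, including the "because".
        for name in ("change", "audience", "expected", "rationale"):
            if not getattr(self, name).strip():
                raise ValueError(f"hypothesis is missing its {name}")

    def statement(self) -> str:
        return (
            f"If we {self.change} for {self.audience}, "
            f"we expect {self.expected}, because {self.rationale}."
        )


# Made-up example values for illustration.
hypothesis = Hypothesis(
    change="lead the pricing page with the annual-savings message",
    audience="visitors arriving from paid search",
    expected="a higher trial start rate",
    rationale="session recordings show them scrolling past the plan table looking for a price",
)
print(hypothesis.statement())
```

Leaving the rationale empty raises an error instead of producing a hypothesis, which is the whole point: no evidence, no experiment.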

Day two

Day two is about execution. Brief goes to design and dev. QA follows. The experiment is live by the end of the day. Two days from problem to running test.

That is the framework. But the reason it works isn’t the timeline.

Why Faster Does Not Mean Sloppier

Most teams run slow experiments for one of two reasons. Either the diagnostic work takes forever because there is no process for it, or the development work takes forever because experiments are not treated as first-class work. Those development bottlenecks are real.

The Growth Sprint™ fixes both by making them sequential and time-boxed.

The diagnostic section has a hard stop. You don’t keep pulling data until you feel confident. You pull data until the time is up, then you make a call with what you have. This sounds uncomfortable. It’s supposed to be. The discomfort is what forces you to prioritise the evidence that actually matters instead of collecting more.

This is one of my more contrarian positions on CRO. The teams that run the most experiments rarely have the most data. They have a clear enough picture to make a directional call and they move. The teams that are drowning in dashboards are often using data as a way to avoid committing to anything.

The execution day works the same way. The brief is locked because changes that come later delay the sprint, and that is probably what has affected your program so far. That rule sounds harsh until you realise how many experiments die in the gap between “let’s do it” and “let’s just tweak this one more time.”

What the Growth Sprint™ Protects Against

There is a version of CRO that is basically theatre. Teams run experiments to feel like they are being scientific. They celebrate wins loudly and explain losses away quietly. After a year of this, they have a long list of “winning” experiments and a conversion rate that hasn’t moved.

The Growth Sprint™ is structured specifically to make that harder to do.

Because the hypothesis requires a “because,” you are on record about why you think the change will work. If the experiment loses, you now have information. Either your diagnosis was wrong, your solution did not address what you found, or the thing you found does not actually drive conversion. Any of those is useful. That is the point.

Losses are not failures in this framework. A loss with a clear hypothesis tells you something. A win without one tells you almost nothing. You cannot repeat what you do not understand.

The other thing the Growth Sprint™ protects against is the obsession with layout changes. Redesigning a page is visible. It feels like progress. But in my experience, copy changes move the needle more often and more significantly than layout changes. A pricing page that leads with the wrong value proposition will not be fixed by moving the CTA button. The Growth Sprint diagnostic phase forces you to look at what the page is saying before you touch how it looks.

Running a Growth Sprint™ with a SaaS Team

SaaS companies are a good fit for this framework because they usually have the traffic data, the dev capacity, and the continuous product cycle that makes rapid experimentation possible. What they often lack is the process to connect those things.

The common version of this problem:

- growth team identifies an issue

- writes a ticket

- ticket sits in the backlog for six weeks

- eventually ships a change without a control

- learns nothing

The Growth Sprint™ doesn’t fix a broken engineering process on its own. But it does give you something concrete to point at when you are arguing for dedicated experiment capacity. Two weeks per sprint, eight sprints per quarter per stream: that is a real roadmap. It is easier to resource something you can put on a calendar.

For SaaS specifically, the diagnostic day works well when you segment by acquisition source from the start. Users who arrive from a paid search campaign behave differently to users who come from a product review site. If your hypothesis is not written for a specific segment, you will often get a null result even when the change is right, just aimed at the wrong audience.
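The sketch below shows how a blended result can come out flat while individual segments move in opposite directions. Everything in it is assumed for illustration: the session counts are invented, and `conversion_rates` and `make_sessions` are hypothetical helpers, not part of any analytics tool.

```python
from collections import defaultdict


def conversion_rates(sessions, key):
    """Return the conversion rate for each group produced by `key`."""
    totals = defaultdict(lambda: [0, 0])  # group -> [conversions, sessions seen]
    for session in sessions:
        bucket = totals[key(session)]
        bucket[0] += int(session["converted"])
        bucket[1] += 1
    return {group: converted / seen for group, (converted, seen) in totals.items()}


def make_sessions(source, variant, conversions, visitors):
    """Build fake session rows in which the first `conversions` rows converted."""
    return [
        {"source": source, "variant": variant, "converted": i < conversions}
        for i in range(visitors)
    ]


# Invented data: the variant helps paid search and hurts review-site traffic.
sessions = (
    make_sessions("paid_search", "control", 10, 100)
    + make_sessions("paid_search", "variant", 20, 100)
    + make_sessions("review_site", "control", 30, 100)
    + make_sessions("review_site", "variant", 20, 100)
)

blended = conversion_rates(sessions, key=lambda s: s["variant"])
by_segment = conversion_rates(sessions, key=lambda s: (s["source"], s["variant"]))

print("Blended:", blended)
for segment, rate in sorted(by_segment.items()):
    print(segment, f"{rate:.0%}")
```

In this made-up data the blended control and variant rates are identical, so an unsegmented readout would call the test a null result even though the change doubled conversion for paid search visitors.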

The other thing SaaS teams get wrong is scope. A Growth Sprint™ is not the right container for a full onboarding redesign. It is the right container for testing whether changing the empty state on the dashboard improves day-three retention. Specific problem, specific change, specific metric. If you can’t say it in one sentence, the sprint is too big.

The Difference That Actually Matters

A design sprint answers, “Is this idea worth building?”

A Growth Sprint answers, “Does this change move this metric for this audience?”

Both questions are legitimate. They just belong in different moments of a company’s life. Early-stage, pre-product-market-fit, the design sprint question is the right one. Post-PMF, with traffic and a functioning funnel, the Growth Sprint question is what you need.

The mistake most teams make is running design sprint energy on a growth sprint problem. Long workshops, lots of opinions, a prototype that feels good, and no data at the end of it. Months later, still guessing.

Compress the cycle. Commit to the hypothesis. Ship the test. Read the result. Go again.

That is it. Everything else is detail.

Run Your First Growth Sprint™ This Week

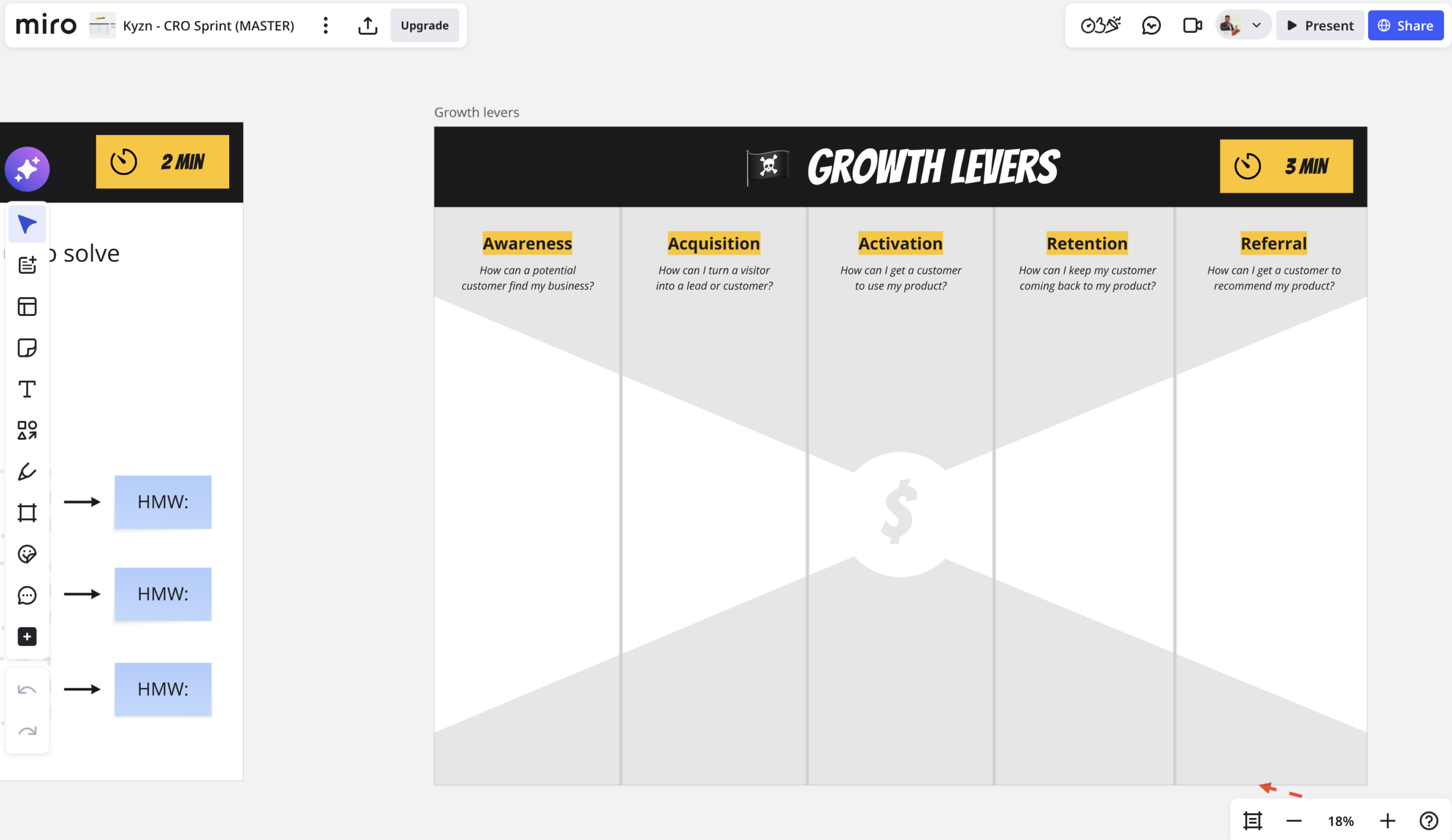

I built a Miro template that walks you through the full two-week cycle. Diagnostic framework, hypothesis builder, brief structure, QA checklist. Everything you need to run a Growth Sprint with your team without starting from a blank board.

If you want the template, I’m sharing it for free. It’s coming soon.

And if you want to pressure-test your hypothesis before the sprint starts, the Experiment Validator will tell you where it is weak before you waste a sprint finding out the hard way.