When I looked at their setup, the problem was obvious. They were testing before they understood anything. No clear conversion goal. Analytics tracking a different funnel than the one users actually went through. Copy that hadn’t been touched in two years. They were optimising a broken system, just optimising it slightly differently each week.

That’s the most common CRO mistake I see. Not running bad tests. Running tests before you’ve done the audit that tells you where the real problem is.

So here’s the conversion rate optimization checklist I run through before a single test gets designed. This is a pre-experiment audit, not a testing roadmap. The distinction matters.

Start With Your Conversion Goal (You’d Be Surprised)

Before anything else, I ask: what’s the one conversion event that matters most to this business right now? Not a list. One thing.

Most teams can’t answer that cleanly. They say “sign-ups” but mean “paid conversions.” They say “demo requests” but actually care about sales-qualified lead (SQL) volume. The fuzziness in the answer tells you a lot about why their experiments keep producing inconclusive results. You can’t optimise toward a target you haven’t agreed on.

Get the primary conversion goal in writing. Make sure the analytics platform is tracking it. Make sure the test platform will measure it. Now read that again. That’s the foundation. Without it, nothing else on this list matters.

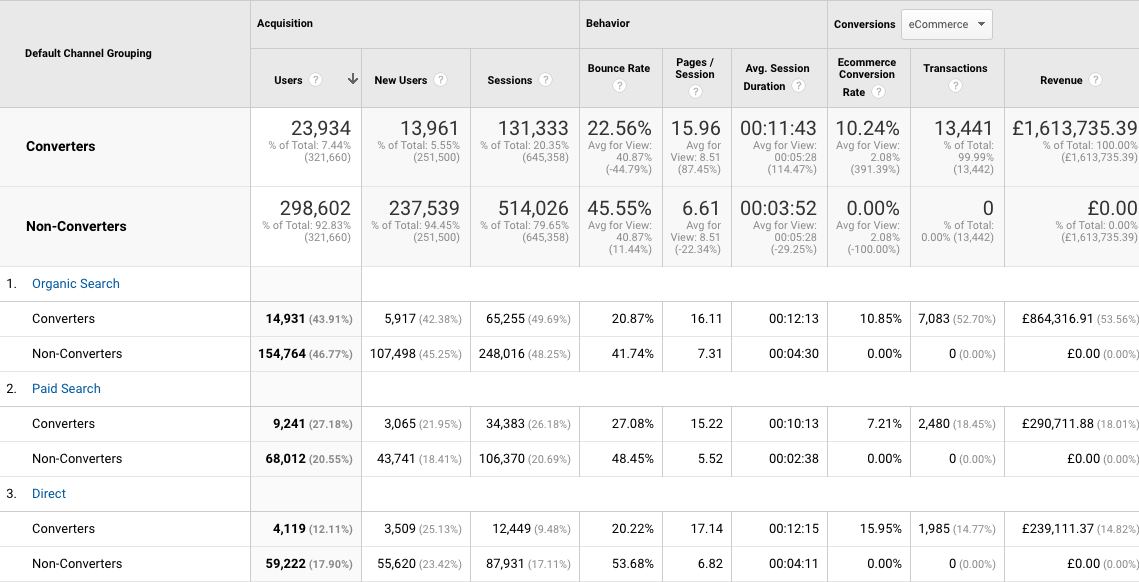

Audit Your Analytics Before You Trust Any Number

This one gets skipped constantly because it can feel like someone else’s responsibility. People look at a 2.3% conversion rate and start generating hypotheses. But if your tracking is broken, that number is fiction.

Check that your conversion event fires once per conversion, not on every page load. Check that your traffic sources are attributed correctly. If “direct” traffic is suspiciously high, that often points to missing or stripped UTM parameters, and it means you don’t actually know where your users are coming from. Check that your funnel steps match how users really move through your site, not how you assumed they would when you set it up eighteen months ago.

I’ve seen analytics setups where the checkout confirmation page was firing the purchase event three times per transaction. The conversion rate looked incredible. The actual business results told a completely different story.
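A quick way to catch that kind of duplicate firing is to count conversion events per transaction in a raw event export. This is a minimal sketch, assuming each exported event is a dict with hypothetical `event` and `transaction_id` fields; your analytics platform will name these differently.

```python
from collections import Counter


def find_duplicate_conversions(events, event_name="purchase"):
    """Return {transaction_id: count} for transactions logged more than once."""
    counts = Counter(
        e["transaction_id"] for e in events if e["event"] == event_name
    )
    return {tx: n for tx, n in counts.items() if n > 1}


def inflation_factor(events, event_name="purchase"):
    """Ratio of raw conversion events to unique transactions (1.0 means clean)."""
    ids = [e["transaction_id"] for e in events if e["event"] == event_name]
    return len(ids) / len(set(ids)) if ids else 1.0


# Hypothetical export: order A-100 fired three times, order A-101 once.
sample_events = [
    {"event": "purchase", "transaction_id": "A-100"},
    {"event": "purchase", "transaction_id": "A-100"},
    {"event": "purchase", "transaction_id": "A-100"},
    {"event": "page_view", "transaction_id": None},
    {"event": "purchase", "transaction_id": "A-101"},
]

print(find_duplicate_conversions(sample_events))
print(inflation_factor(sample_events))
```

An inflation factor above 1.0 means the reported conversion rate is overstated by roughly that multiple.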

Fix the tracking first. Form hypotheses second.

Pull Your Qualitative Data

Quantitative data tells you where people drop off. That’s the what. Qualitative data tells you why. You need both before a test is worth running.

Session recordings come first. Spend two hours watching real users on your highest-traffic pages. You will see things that no heatmap or funnel report will ever surface. Users reading a pricing page then scrolling back up to find something specific. Users hovering over a CTA and then leaving. Users clicking on things that aren’t clickable. These moments are hypotheses you couldn’t have invented at a whiteboard.

Then go through your on-site survey data if you have it. If you don’t, set one up. A single question on your pricing page asking “what’s stopping you from signing up today?” will give you more test ideas in a month than a year of analytics review. Real objections from real users. That’s the raw material.

User interviews are the gold standard. Even five conversations with recent sign-ups and five with people who visited but didn’t convert will restructure your entire understanding of the problem.

Review Your Copy Before You Touch a Single Layout

This is the one most teams ignore completely. In my experience, copy changes outperform layout changes more often than most CRO practitioners would expect. But the industry is obsessed with redesigns and component shuffling, so copy sits untouched for years while the button placement gets A/B tested to death.

Read your homepage headline out loud. Does it say what you actually do? Or does it say something like “Empowering teams to unlock their potential”? If a stranger read it and couldn’t tell you what the product is, that’s a conversion problem. Not a design problem. A writing problem.

Check your CTA copy. “Get Started” is not a CTA. It’s a placeholder that never got replaced. “Start your free 14-day trial” is a CTA. It tells the user exactly what happens next. That specificity reduces anxiety. Reduced anxiety increases conversion. Simple.

Check your value proposition on every major landing page, not just the homepage. Each page a user might land on from paid search or organic is doing its own job. If the copy doesn’t match the intent of that specific visitor, you’re losing conversions before any UX issue even comes into play.

Map the Actual User Journey

Not the journey you designed. The journey users actually take.

Pull your top traffic pages and your top exit pages. Find the gaps. Where do people arrive from paid traffic? Where do they go next? Where do they leave? There’s almost always a page in the middle of that flow that nobody has looked at in a long time because it wasn’t considered important. That page is often the leak.
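If your analytics tool doesn’t surface this directly, exit rate per page is easy to compute from session paths. The sketch below assumes you can export each session as an ordered list of page paths; the paths and the `min_views` cutoff are illustrative, not a standard.

```python
from collections import Counter


def exit_rates(sessions, min_views=1):
    """Return (page, exit_rate) pairs, highest exit rate first.

    sessions: list of sessions, each an ordered list of page paths.
    exit_rate = sessions ending on the page / total views of the page.
    """
    views = Counter()
    exits = Counter()
    for pages in sessions:
        if not pages:
            continue
        views.update(pages)
        exits[pages[-1]] += 1  # the last page viewed is the exit page
    rates = {p: exits[p] / v for p, v in views.items() if v >= min_views}
    return sorted(rates.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical paths from paid traffic landing on /lp.
sample_sessions = [
    ["/lp", "/pricing", "/signup"],
    ["/lp", "/pricing"],
    ["/lp", "/pricing"],
    ["/lp"],
]

for page, rate in exit_rates(sample_sessions):
    print(f"{page}: {rate:.0%}")
```

The final step of a flow will always show a high exit rate, so read the mid-flow pages first. Here `/pricing` loses two of its three visitors, which is the kind of page worth a closer look.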

For SaaS, look specifically at the onboarding flow after sign-up. A lot of CRO work focuses on getting the sign-up. The drop-off between sign-up and activation is where the real conversion problem often lives. If users sign up and don’t come back, your top-of-funnel conversion rate is meaningless. You’re filling a bucket with a hole in it.
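To see where the hole in the bucket is, lay the step-to-step rates side by side. A minimal sketch, assuming you already have user counts for each step; the step names and numbers here are made up for illustration.

```python
def funnel_report(step_counts):
    """Return (transition, rate) pairs for consecutive funnel steps.

    step_counts: ordered list of (step_name, users_reaching_step).
    """
    report = []
    for (prev_name, prev_n), (name, n) in zip(step_counts, step_counts[1:]):
        rate = n / prev_n if prev_n else 0.0
        report.append((f"{prev_name} -> {name}", rate))
    return report


# Hypothetical monthly counts for a SaaS funnel.
sample_funnel = [("visit", 10_000), ("sign_up", 400), ("activated", 100)]

for transition, rate in funnel_report(sample_funnel):
    print(f"{transition}: {rate:.1%}")
```

In this made-up example, three out of four sign-ups never activate. That drop-off is a bigger lever than anything on the landing page.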

Check Your Technical Performance

Page speed is a conversion factor. Not a technical nicety. A conversion factor.

Run your key landing pages through PageSpeed Insights. If your mobile score is below 50, that is worth fixing before you run any test on that page. A slow page doesn’t just frustrate users. It affects the test results themselves, because some users leave before the test variant even loads. You end up measuring the wrong thing.
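If you have more than a handful of landing pages, you can script this check against the PageSpeed Insights API instead of pasting URLs one at a time. The sketch below assumes the public v5 endpoint and its Lighthouse response shape; the threshold of 50 is the rule of thumb from this checklist, not something the API defines. Unauthenticated requests are rate-limited, so expect to add an API key for anything beyond spot checks.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_url(page_url, strategy="mobile"):
    """Build the request URL for one page and device strategy."""
    return f"{PSI_ENDPOINT}?{urlencode({'url': page_url, 'strategy': strategy})}"


def performance_score(psi_response):
    """Convert the 0-1 Lighthouse performance score in a PSI response to 0-100."""
    score = psi_response["lighthouseResult"]["categories"]["performance"]["score"]
    return round(score * 100)


def fetch_mobile_score(page_url):
    """Call the API over the network and return the mobile performance score."""
    with urlopen(psi_url(page_url), timeout=60) as resp:
        return performance_score(json.load(resp))


def ready_to_test(score, threshold=50):
    """Apply the checklist rule: fix speed before testing if below threshold."""
    return score >= threshold
```

Calling `fetch_mobile_score("https://example.com/pricing")` and passing the result to `ready_to_test` gives you a simple go or no-go per page. Scores vary between runs, so treat a borderline result as a prompt to look closer, not a verdict.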

Also check your pages on actual mobile devices. Not just resized browser windows. Real phones. You’ll often find form fields that are too small to tap, modals that don’t close properly, or navigation that covers the CTA on certain screen sizes. These are not optimisation opportunities. These are bugs. Fix them before you run experiments on top of them.

Assess Your Traffic Volume (Honestly)

Not every company needs CRO. Some need something entirely different.

If you have fewer than 1,000 monthly visitors hitting your key conversion page, you almost certainly don’t have enough traffic to run statistically valid A/B tests. You’ll be waiting months for results that still won’t be conclusive. In that situation, the right move is to focus on traffic acquisition, direct sales conversations, or copy rewrites that you ship and monitor directionally, rather than split tests you try to run to significance.

Use a sample size calculator before you build a test plan. There are plenty of free ones. Input your current conversion rate and your minimum detectable effect. The result will tell you how many visitors you need per variant. If that number is higher than what you actually get in a reasonable timeframe, the test just isn’t viable. That’s useful information. It means your resources go somewhere else.
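If you’d rather see what those calculators are doing, here is a minimal sketch using the standard normal-approximation formula for comparing two proportions. The defaults (two-sided 5% significance, 80% power) are common conventions I’m assuming here, and individual calculators use slightly different formulas, so expect their numbers to differ a little.

```python
from math import ceil
from statistics import NormalDist


def visitors_per_variant(baseline_rate, relative_mde, alpha=0.05, power=0.80):
    """Approximate visitors needed per variant for a two-sided A/B test.

    baseline_rate: current conversion rate, e.g. 0.023 for 2.3%.
    relative_mde: smallest relative lift worth detecting, e.g. 0.20 for 20%.
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_complete(n_per_variant, monthly_visitors, variants=2):
    """Estimate test duration in weeks, assuming all traffic enters the test."""
    weekly_visitors = monthly_visitors * 12 / 52
    return n_per_variant * variants / weekly_visitors


n = visitors_per_variant(baseline_rate=0.023, relative_mde=0.20)
print(n, "visitors per variant")
print(round(weeks_to_complete(n, monthly_visitors=1_000)), "weeks at 1,000 visitors/month")
```

With a 2.3% baseline and a 20% relative lift as the target, this comes out on the order of 18,000 visitors per variant. At 1,000 visitors a month, that test would take years, which is the point of running the numbers first.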

Define What a Loss Means Before You Start

We run experiments because we don’t know the answer. That means losses are not failures. They’re findings. But only if you’ve decided in advance what you’ll do with the information.

Before any test is built, document the hypothesis, the metric, the minimum detectable effect and the decision rule. If variant B loses by more than 10% relative, what happens? If the result is flat, what does that tell you about the hypothesis? These decisions should be made before the data comes in, not after. Post-hoc rationalisation is the thing that turns a CRO programme into theatre.
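One way to make that pre-commitment hard to wriggle out of is to write the plan down as data before launch. This is a sketch of one possible structure, not a standard format: the `ExperimentPlan` class, its field names, the example hypothesis, and the simplification that a loss is judged on the observed lift alone are all my assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: the plan cannot be edited once created
class ExperimentPlan:
    hypothesis: str
    metric: str
    relative_mde: float
    loss_threshold: float  # relative lift at or below this counts as a loss
    on_win: str
    on_flat: str
    on_loss: str

    def decide(self, relative_lift, significant):
        """Return the action agreed before the test, given the observed result."""
        if relative_lift <= self.loss_threshold:
            return self.on_loss
        if significant and relative_lift > 0:
            return self.on_win
        return self.on_flat


# Hypothetical plan for a CTA copy test.
plan = ExperimentPlan(
    hypothesis="A specific CTA reduces anxiety and lifts trial starts",
    metric="trial_start_rate",
    relative_mde=0.20,
    loss_threshold=-0.10,
    on_win="Ship variant B and test the same copy on paid landing pages",
    on_flat="Keep control; CTA copy is not the main objection, revisit survey data",
    on_loss="Revert to control and interview users about the new wording",
)

print(plan.decide(relative_lift=-0.12, significant=True))
```

The value isn’t in the code. It’s that the three outcomes and their consequences exist in writing before anyone has seen a number.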

The audit doesn’t end when you start testing. It informs every test you run. Go through this checklist once properly and you’ll run fewer experiments. The ones you do run will actually teach you something.

Check out the detailed 12-step process for auditing your website. And if you want help structuring a specific experiment before you run it, use the Experiment Validator. It walks you through hypothesis quality, sample size, and decision rules before a single line of test code gets written.
